First, you will need to deal with the atmosphere, which is most easily handled spectrally rather than in RGB because scattering has simple wavelength-based formulas. But you'll also have an RGB texture for the moon, so I would use the lazy spectral method.

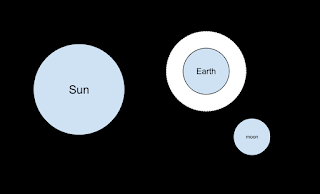

Here are the four spheres-- the atmosphere sphere and the Earth share the same center. Not to scale (speaking of which, choose sensible units like kilometers or miles, and I would advise making everything a double rather than float).

The atmosphere can be almost arbitrarily complicated, but I would advise making it all Rayleigh scatterers with constant density. You can always add more complicated mixtures and densities later. To set the constant density, just try to get the overall opacity about right. A random web search yields this image from Martin Chaplin:

This means something between 0.5 and 0.7 (which is probably good enough-- a constant atmospheric model is probably a bigger limitation). In any case I would use the "collision" method, where the atmosphere looks like a solid object to your software and exponential attenuation is implicit.
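As a minimal sketch of the collision method in a constant-density medium: the distance to the next collision is drawn from an exponential distribution, so the fraction of rays that cross a segment without colliding comes out as exp(-sigma * length) without ever computing it explicitly. The function names are hypothetical, and reading the 0.5 to 0.7 figure as the fraction transmitted along a straight 100 km path is an assumption made only for illustration.

```python
import math
import random

def sigma_from_transmittance(transmittance, thickness_km):
    """Collision coefficient (per km) for which `transmittance` of the light
    survives a straight path of `thickness_km` at constant density."""
    return -math.log(transmittance) / thickness_km

def sample_collision_distance(sigma, rng=random):
    """Distance to the next collision, drawn from the pdf sigma * exp(-sigma * t)."""
    # rng.random() is in [0, 1), so the argument of log is in (0, 1].
    return -math.log(1.0 - rng.random()) / sigma

def ray_collides(sigma, path_length_km, rng=random):
    """True if the ray scatters before leaving a segment of the given length."""
    return sample_collision_distance(sigma, rng) < path_length_km

# Assumed numbers, for illustration only: 60% transmitted over 100 km.
sigma = sigma_from_transmittance(0.6, 100.0)
```

With this in place the atmosphere sphere behaves like any other object: intersect the ray with it, find the length of the segment inside, and ask `ray_collides` whether the ray "hits" the medium along that segment.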

For the Sun you'll need the spectral radiance for when a ray hits it. If you use the lazy binned RGB method and don't worry about absolute magnitudes because you'll tone map later anyway, you can eyeball the above graph and guess that for [400-500, 500-600, 600-700] nm you can use [0.6, 0.8, 1.0]. If you want to maintain absolute units (not a bad idea-- it is good to do some unit tests on things like the luminance of the moon or sky), data for the sun is available in lots of places, but be careful to make sure it is spectral radiance, or convert it to that (radiometry is a pain).
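One way the binned lookup might be coded, as a sketch rather than a definitive version: each ray carries a single wavelength, and both the sun's relative radiance and an RGB texel are looked up by the 100 nm bin that wavelength falls in. The helper names are hypothetical, and mapping the 400-500 nm bin to blue, 500-600 nm to green, and 600-700 nm to red is my assumption about how the bins line up with the texture channels.

```python
import random

# Relative sun radiance per bin, eyeballed as in the text (no absolute units).
SUN_RADIANCE_BINS = [0.6, 0.8, 1.0]  # [400-500, 500-600, 600-700] nm
BIN_START_NM = 400.0
BIN_WIDTH_NM = 100.0

def sample_wavelength(rng=random):
    """Uniform random wavelength in [400, 700) nm."""
    return BIN_START_NM + 3.0 * BIN_WIDTH_NM * rng.random()

def bin_index(wavelength_nm):
    """Index 0, 1, or 2 of the 100 nm bin containing the wavelength (clamped)."""
    i = int((wavelength_nm - BIN_START_NM) // BIN_WIDTH_NM)
    return min(max(i, 0), 2)

def sun_radiance(wavelength_nm):
    """Relative spectral radiance returned when a ray hits the sun."""
    return SUN_RADIANCE_BINS[bin_index(wavelength_nm)]

def texture_value(rgb, wavelength_nm):
    """Treat an (r, g, b) texel as three reflectance bins: short wavelengths
    read the blue channel, long wavelengths read the red channel."""
    r, g, b = rgb
    return (b, g, r)[bin_index(wavelength_nm)]
```

A ray's final value would then be accumulated into the output channel matching its bin.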

For the moon you will need a BRDF and a texture to modulate it. For a first pass use Lambertian, but that will not give you the nice constant-color moon. This paper by Yapo and Cutler has some great moon renderings, and they use the BRDF that Jensen et al. suggest:

Texture maps for the moon, again from a quick Google search, are here.

The Earth you can make black or give it a texture if you want Earth shine. I ignore atmospheric refraction -- see Yapo and Cutler for more on that.

For a path tracer with a collision method, as I prefer, and implicit shadow rays (so the sun directions are more likely to be sampled, but all rays are just scattered rays), the program would look something like this:

- For each pixel
  - For each viewing ray, choose a random wavelength
    - Send it into the (moon, atmosphere, earth, sun) list of spheres
    - If it hits, scatter according to the pdf (simplest would be half isotropic and half to the sun)
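The "half isotropic and half to sun" scattering pdf can be sketched as a two-component mixture: with probability 1/2 pick a uniform direction on the sphere, otherwise pick a uniform direction inside the cone of directions that reach the sun, and in either case report the combined density of the direction actually chosen. All function names here are hypothetical, and the uniform-in-cone sampling is one reasonable choice rather than the only one.

```python
import math
import random

def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def normalize(v):
    n = math.sqrt(dot(v, v))
    return (v[0] / n, v[1] / n, v[2] / n)

def sun_cos_max(distance_to_sun, sun_radius):
    """Cosine of the half-angle of the cone subtended by the sun sphere."""
    return math.sqrt(1.0 - (sun_radius / distance_to_sun) ** 2)

def sample_uniform_sphere(rng):
    z = 1.0 - 2.0 * rng.random()
    r = math.sqrt(max(0.0, 1.0 - z * z))
    phi = 2.0 * math.pi * rng.random()
    return (r * math.cos(phi), r * math.sin(phi), z)

def sample_cone(axis, cos_max, rng):
    """Uniform direction inside the cone around the unit vector `axis`."""
    cos_t = 1.0 - rng.random() * (1.0 - cos_max)
    sin_t = math.sqrt(max(0.0, 1.0 - cos_t * cos_t))
    phi = 2.0 * math.pi * rng.random()
    # Build an orthonormal basis (u, v, axis).
    helper = (1.0, 0.0, 0.0) if abs(axis[0]) < 0.9 else (0.0, 1.0, 0.0)
    u = normalize(cross(helper, axis))
    v = cross(axis, u)
    return tuple(sin_t * math.cos(phi) * u[i] + sin_t * math.sin(phi) * v[i]
                 + cos_t * axis[i] for i in range(3))

def mixture_pdf(direction, sun_axis, cos_max):
    """Density (per steradian) of the half-isotropic, half-to-sun mixture."""
    p_iso = 1.0 / (4.0 * math.pi)
    in_cone = dot(direction, sun_axis) >= cos_max
    p_sun = 1.0 / (2.0 * math.pi * (1.0 - cos_max)) if in_cone else 0.0
    return 0.5 * p_iso + 0.5 * p_sun

def sample_scatter_direction(sun_axis, cos_max, rng=random):
    """Return (direction, pdf) drawn from the mixture."""
    if rng.random() < 0.5:
        d = sample_uniform_sphere(rng)
    else:
        d = sample_cone(sun_axis, cos_max, rng)
    return d, mixture_pdf(d, sun_axis, cos_max)
```

The scattered ray's weight is then the phase function (or BRDF times cosine, for a surface) divided by the returned pdf. Note that the pdf is evaluated for the full mixture regardless of which branch produced the direction; that is what keeps the estimate unbiased.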

The most complicated object above would be the atmosphere sphere where the probability of hitting per unit length would be proportional to (1/lambda^4). I would make the Rayleigh scattering isotropic just for simplicity, but using the real phase function isn't that much harder.
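For concreteness, here is a small sketch of the wavelength dependence and the two phase-function options. The function names, the reference wavelength of 550 nm, and `sigma_ref` (the collision coefficient you picked at that reference wavelength) are all hypothetical parameters; the Rayleigh phase function used is the standard (3 / 16π)(1 + cos²θ).

```python
import math

def rayleigh_sigma(wavelength_nm, sigma_ref, ref_wavelength_nm=550.0):
    """Collision probability per unit length, proportional to 1 / lambda^4
    and equal to `sigma_ref` at the reference wavelength."""
    return sigma_ref * (ref_wavelength_nm / wavelength_nm) ** 4

def isotropic_phase(cos_theta):
    """Isotropic phase function (per steradian); ignores the angle."""
    return 1.0 / (4.0 * math.pi)

def rayleigh_phase(cos_theta):
    """Rayleigh phase function (per steradian); integrates to 1 over the sphere."""
    return 3.0 / (16.0 * math.pi) * (1.0 + cos_theta * cos_theta)
```

The ratio `rayleigh_sigma(400, s) / rayleigh_sigma(700, s)` is (700/400)^4, a bit over 9, which is where the blue sky and red sunsets come from.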

The picture below from this paper was generated using the techniques described above with no moon-- just the atmosphere.

There-- brute force is great-- get the computer to do the work (note, I already thought that way before I joined a hardware company).

If you generate any pictures, please tweet them to me!